Climb the Ladder!#

Our class moves quickly! Sometimes, it feels like we make leaps in logic that are a bit too big. In this ladder challenge, we’ll learn some core math concepts, some linear algebra, and the numpy library. Problems in this notebook start out easy and progressively get harder, so that the next rung of the Python ladder is always within reach.

Additionally, since not all of the topics discussed in this ladder challenge are explicitly taught in our course, these problems come with many more hints, tips, suggestions, and even sometimes a mini-lesson. You are encouraged to Google frequently throughout the lesson. In many ways, this ladder is meant to be a challenge as well as educational in its own right.

Remember our one rule: NO LOOPS! None of the exercises in this notebook require a loop. If you use a loop to solve any of these problems, you are solving the problem incorrectly.

Import numpy in the usual way

import numpy as np

Section V: All the Matrix Algebra in the World#

This final section is going to be a little bit different. We are aware than many students come to DSI from a wide variety of backgrounds, and many students have very little experience with linear algebra. However, data science is an intensely mathematical subject, and most of that math is linear algebra.

This section is going to teach you some of the linear algebra topics we need while also giving you hands-on examples of how it might be carried out in raw numpy.

Part 1: Vector Norms#

While vectors are often drawn as arrows, really they are simply points on a coordinate plane. They can be denoted many ways using mathematical notation, but which you use depends on what field of study you are in, or whichever is most convenient to your situation. That is, the following are all the same vector:

Where the middle one (the “column vector”) is often the most convenient when doing matrix algebra, so that is what we will use.

The norm of a vector is the distance from that vector to the origin (that is, \((0, 0)\)). It is denoted and computed as follows:

For the next few problems, we’ll use the following vectors:

v = np.load("data/v.npy")

w = np.load("data/w.npy")

Compute the vector norm of the vector \(\mathbf{v}\) using the matrix algebra we’ve learned so far in

numpy.

Compute the vector norm of the vector \(\mathbf{v}\) using a built-in

numpyfunction.

Hint:

np.linalg.something()

The distance between two vectors is given by the norm of their difference. That is,

Compute the distance between \(\mathbf{v}\) and \(\mathbf{w}\)

There are actually many kinds of distances. The one I’ve shown you so far is the most common, and it goes by many names, usually Euclidean distance or \(\mathcal{l}_2\) distance. There is also an \(\mathcal{l}_1\) distance, sometimes called Taxi cab distance or Manhattan distance:

Can you figure out why it gets these names?

Compute the Manhattan distance between \(\mathbf{v}\) and \(\mathbf{w}\).

Hint: You can actually use the same function from numbers 69 and 70!

Part 2: Linear Regression#

The first type of machine learning model (aka “statistical model”) we’ll learn in our course is the ordinary least squares linear regression model (usually just linear regression or OLS for short).

In linear regression, we believe that our (quantitative) \(y\) variable depends on some linear relationship of our \(x\) variables. That is, if we have 3 \(x\)s, for observation \(i\),

We often like to incorporate all of our data into this equation by writing this formula in matrix notation, which is actually much more concise:

Where

and the data matrix (or design matrix) is:

Using a paper and pencil, multiply out \(\mathbf{y} = \mathbf{X}\beta\) as denoted above. Verify to yourself that this makes sense, and that it matches the first equation above (for \(p = 3\)). Really, take the time to do this! Being comfortable with equations like these is an import concept in our course.

We load some data below. However, it doesn’t include that column of ones that corresponds to the \(y\)-intercept (\(\beta_0\)). Create a matrix \(\mathbf{X}\) by adding the column of ones.

Hint: Use

np.hstack()

X = np.load("data/X.npy")

y = np.load("data/y.npy")

What are the dimensions of \(\mathbf{X}\)? How many observations are in this data set, and how many variables? What should be the size of \(\beta\)?

The goal of OLS is to estimate \(\beta\). When we estimate a value, we like to put a “hat” on it. The estimate of \(\beta\) is then \(\hat{\beta}\), and our predictions for our observations are \(\hat{\mathbf{y}}\). OLS is one of the rare opportunities where we can computer \(\hat{\beta}\) directly. It has the following formula:

Compute \(\hat{\beta}\). Save it as

beta_hat.

Compute our predictions. That is, \(\mathbf{\hat{y}} = \mathbf{X}\hat{\beta}\). Save this as

y_hat.

A variant on OLS is called ridge regression, where, for some number \(\lambda\), we instead compute

where \(I\) is the approprate identity matrix.

Compute \(\hat{\beta}^\text{ridge}\) for \(\lambda = 50\). Call this

beta_hat_ridge.

Compute the predictions for this model, \(\hat{\mathbf{y}}^\text{ridge}\). Call this

y_hat_ridge.

Compute \(\|\hat{\beta}\|\) and \(\|\hat{\beta}^\text{ridge}\|\). Which is smaller?

Part 3: MSE#

Computing \(\hat{\beta}\) involves minimizing the MSE. We can now also write the MSE in terms of matrix algebra:

Take a paper and pencil and verify that all three of these things are equivalent.

Compute the \(MSE\) using the first equation. (You already did this in an earlier exercise).

Compute the \(MSE\) using the second equation. That is, using matrix multiplication.

Compute the \(MSE\) using the third equation. That is, using

np.linalg.norm().

Write a function that takes in

yandy_hatand computes the \(MSE\).

Compute the \(MSE\) for both the OLS and ridge regression predictions in the previous part. Which model has a higher MSE?

** Bonus: ** Part 4: Singular Value Decomposition ** Not Required **#

There are many kinds of matrix decomposition. Matrix decomposition involves taking a matrix, and breaking it into the product of other matrices that are easier to work with. Any real-valued matrix \(M\) can be broken up into its singular value decomposition:

where:

\(U\) and \(V\) are orthonormal matrices (more on this soon). The columns of \(U\) and \(V\) are referred to as the left and right singular vectors, respectively.

\(S\) is a diagonal matrix, where the elements of the diagonal are the singular values in descending order.

That is, \(S\) looks like:

There are A LOT of confusing definitions here, so let’s dive into them individually.

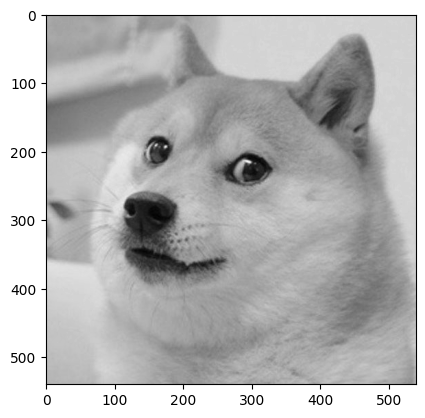

Below, we read in a matrix of pixel values that make up a pleasant image. We can use plt.imshow() to plot a matrix visually.

import matplotlib.pyplot as plt

doge = np.load("data/doge.npy")[:, 200:740]

print(doge.shape)

plt.imshow(doge, cmap="gray");

(540, 540)

Use the

np.linalg.svd()function to decompose ourdogematrix. Please skim the documentation of this function! Note that it returns \(V^T\), and not \(V\). It also returns a vector of the singular values, not the full \(S\) matrix.

The singular values are non-negative numbers in descending order. An easy way to think about them is that they represent how much information we can represent in our matrix using a linear transformation. In the special case of a square matrix \(M\), the singular values are also eigenvalues. (Similarly, the left singular vectors are the corresponding eigenvectors).

We recognize this still probably doesn’t make a whole lot of sense. By the end of this exercise, we can interpret them visually, and hopefully that will clear things up.

Use our vector of singular values to make an

smatmatrix corresponding to \(S\) as shown above.

Hint: Use

np.diag().

The matrices \(U\) and \(V\) are orthonormal. This means they have the following properties:

Verify the above using Python. Note that you might get precision errors (that is,

2.77e-17instead of0).

Why do we do this at all?! For SVD, we seek to approximate large, complex matrices. To see what we mean, let’s see what happens when we remove singular values from our matrix.

Create a new matrix, \(\tilde{S}\), that is the same as \(S\), but with all but the first 20 singular values turned into zeros. You may call this variable

smat2.

Let’s approximate our original

dogematrix. That is, recombine our decomposed matrix, but this time having removed most of our singular values:

You can call the result doge_approx.

Visualize

doge_approxusingplt.imshow(). How has the image changed?

Try this again with values besides 20. Can you still tell what the original image was with only 10 singular values?

Still… so what?#

SVD is at the heart of one of our most confusing topics - principal component analysis. PCA involves carrying out SVD on our data matrix in order to reduce its dimensionality. That is, can we still have all the information we need in a data set while having fewer variables?