Text Classification and Recurrent Neural Networks

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

%pip install datasets


from datasets import load_dataset

ds = load_dataset("Paul/hatecheck")


df = ds['test'].to_pandas()

df['test_case'].head()

0 I hate women.

1 I hate trans people.

2 I hate gay people.

3 I hate black people.

4 I hate disabled people.

Name: test_case, dtype: object

df['label_gold'].value_counts()

label_gold

hateful 2563

non-hateful 1165

Name: count, dtype: int64
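The classes are imbalanced, so an accuracy score needs a reference point. The snippet below uses the counts printed above to compute the accuracy of a trivial model that always predicts the majority class. On a random 20% test split the share will differ slightly from the full-data figure.

```python
# Class counts reported by value_counts() above
counts = {"hateful": 2563, "non-hateful": 1165}

# A model that always predicts the majority class is correct
# exactly when the true label is that class.
total = sum(counts.values())
majority_label = max(counts, key=counts.get)
baseline_accuracy = counts[majority_label] / total

print(majority_label, total, round(baseline_accuracy, 4))
```

Any classifier below this accuracy is doing worse than always guessing "hateful".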

Encoding the Text

from sklearn.feature_extraction.text import CountVectorizer

cvect = CountVectorizer()

dtm = cvect.fit_transform(df['test_case'])

pd.DataFrame(dtm.toarray(), columns=cvect.get_feature_names_out()).head()

| | 2020 | 4ssholes | abhor | about | absolute | absolutefilth | absolutely | academics | accepted | accountants | ... | writing | wrong | yeah | years | you | your | yours | yourself | yourselves | zoo |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

5 rows × 1292 columns

X = dtm

y = df['label_gold']

Problem

Split the data and build a random forest classifier on the training data. Compare the train and test scores.

What words were most important in making the classifications?

What elements of the CountVectorizer might you change or grid search to attempt to improve your model?

Text Classification with Neural Networks

import torch

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import Dataset, DataLoader

import numpy as np

import pandas as pd


y = np.where(y == 'hateful', 1, 0)

Xt = torch.tensor(X.toarray(), dtype = torch.float32)

yt = torch.tensor(y, dtype = torch.float32)

yt

tensor([1., 1., 1., ..., 1., 1., 1.])

model = nn.Sequential(nn.Linear(in_features=Xt.shape[1], out_features=1),
                      nn.Sigmoid())

optimizer = optim.Adam(model.parameters(), lr = 0.01)

loss_fn = nn.BCELoss()

for epoch in range(100):
    yhat = model(Xt)
    loss = loss_fn(yhat, yt.unsqueeze(1))
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    if epoch % 10 == 0:
        print(f'Epoch {epoch} Loss: {loss.item()}')

Epoch 0 Loss: 0.6889792680740356

Epoch 10 Loss: 0.5407963991165161

Epoch 20 Loss: 0.457720011472702

Epoch 30 Loss: 0.39816051721572876

Epoch 40 Loss: 0.3553771674633026

Epoch 50 Loss: 0.3219773471355438

Epoch 60 Loss: 0.295131117105484

Epoch 70 Loss: 0.27274569869041443

Epoch 80 Loss: 0.25362011790275574

Epoch 90 Loss: 0.23695124685764313

preds = torch.where(model(Xt) > 0.5, 1, 0)

(preds.flatten() == yt).sum()/len(yt)

tensor(0.9372)
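The Linear + Sigmoid + BCELoss combination above works, but PyTorch also provides `nn.BCEWithLogitsLoss`, which applies the sigmoid inside the loss and is documented as more numerically stable. The sketch below shows that variant on random stand-in data (not HateCheck). The model then outputs logits, and the sigmoid is applied only when probabilities are needed.

```python
import torch
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(0)

# Stand-in data: 64 "documents" with 20 binary term features (random)
X = (torch.rand(64, 20) > 0.7).float()
y = (X[:, 0] + X[:, 1] > 0).float()   # a simple learnable labelling rule

# No Sigmoid layer: the model outputs raw scores (logits)
model = nn.Linear(in_features=X.shape[1], out_features=1)
loss_fn = nn.BCEWithLogitsLoss()      # sigmoid + binary cross-entropy in one step
optimizer = optim.Adam(model.parameters(), lr=0.05)

for epoch in range(200):
    logits = model(X)
    loss = loss_fn(logits, y.unsqueeze(1))
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# Convert to probabilities only for thresholding
with torch.no_grad():
    probs = torch.sigmoid(model(X))
    preds = (probs > 0.5).float().flatten()
accuracy = (preds == y).float().mean().item()
print(f"final loss {loss.item():.3f}, train accuracy {accuracy:.3f}")
```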

import tensorflow as tf

from tensorflow.keras.preprocessing.text import Tokenizer

from tensorflow.keras.preprocessing.sequence import pad_sequences

#create a tokenizer

tokenizer = Tokenizer(num_words = 500)

#fit the tokenizer

tokenizer.fit_on_texts(df['test_case'])

#create document term matrix (binarized)

dtm = tokenizer.texts_to_matrix(df['test_case'])

#take a peek

dtm

array([[0., 0., 0., ..., 0., 0., 0.],

[0., 1., 0., ..., 0., 0., 0.],

[0., 1., 0., ..., 0., 0., 0.],

...,

[0., 1., 1., ..., 0., 0., 0.],

[0., 0., 1., ..., 0., 0., 0.],

[0., 0., 1., ..., 0., 0., 0.]])

df['test_case'][2]

'I hate gay people. '
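Both `texts_to_matrix` and `texts_to_sequences` rely on a word index ranked by frequency, where index 1 is the most common word and index 0 is never used. The pure-Python sketch below approximates that behaviour. It lowercases, replaces Keras' default filter characters with spaces, ranks words by count (ties keep first-seen order), and keeps only indices below `num_words`. The function names are hypothetical, and the sketch is not the Keras implementation itself.

```python
from collections import Counter

# Characters the Keras Tokenizer strips by default (its `filters` argument)
FILTERS = '!"#$%&()*+,-./:;<=>?@[\\]^_`{|}~\t\n'
TABLE = str.maketrans({c: " " for c in FILTERS})


def tokenize(text):
    return text.lower().translate(TABLE).split()


def build_word_index(texts):
    counts = Counter(w for t in texts for w in tokenize(t))
    # Most frequent word -> 1; sorted() is stable, so ties keep first-seen order
    ranked = sorted(counts, key=counts.get, reverse=True)
    return {w: i for i, w in enumerate(ranked, start=1)}


def texts_to_sequences(texts, word_index, num_words):
    # Words whose index is >= num_words are dropped
    return [[word_index[w] for w in tokenize(t)
             if w in word_index and word_index[w] < num_words] for t in texts]


def texts_to_binary_matrix(texts, word_index, num_words):
    rows = []
    for seq in texts_to_sequences(texts, word_index, num_words):
        row = [0.0] * num_words   # column 0 is reserved and stays 0
        for j in seq:
            row[j] = 1.0          # binary: presence, not count
        rows.append(row)
    return rows


docs = ["I dislike mondays.", "I love mondays, and I love coffee."]
wi = build_word_index(docs)
print(wi)
print(texts_to_sequences(docs, wi, num_words=10))
print(texts_to_binary_matrix(docs, wi, num_words=4))
```

Because column 0 is reserved, the first column of `dtm` above is always zero.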

X = dtm

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.2)

X_train

array([[0., 0., 1., ..., 0., 0., 0.],

[0., 1., 1., ..., 0., 0., 0.],

[0., 0., 0., ..., 0., 0., 0.],

...,

[0., 1., 0., ..., 0., 0., 0.],

[0., 0., 0., ..., 0., 0., 0.],

[0., 0., 0., ..., 0., 0., 0.]])

X_train = torch.tensor(X_train, dtype = torch.float)

X_test = torch.tensor(X_test, dtype = torch.float)

y_train = torch.tensor(y_train, dtype = torch.float)

y_test = torch.tensor(y_test, dtype = torch.float)

#model definition

class TextModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.lin1 = nn.Linear(in_features = 500, out_features = 100)  # 500 = tokenizer num_words
        self.lin2 = nn.Linear(100, 100)
        self.lin3 = nn.Linear(100, 1)
        self.sigmoid = nn.Sigmoid()
        self.act = nn.ReLU()

    def forward(self, x):
        x = self.act(self.lin1(x))
        x = self.act(self.lin2(x))
        return self.sigmoid(self.lin3(x))

#ingredients

model = TextModel()

optimizer = optim.Adam(model.parameters(), lr = 0.01)

loss_fn = nn.BCELoss()

#train
for epoch in range(100):
    yhat = model(X_train)
    target = y_train.reshape(-1, 1)
    loss = loss_fn(yhat, target)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    if epoch % 10 == 0:
        print(f'Epoch {epoch} Loss: {loss.item():.4f}')

Xt = X_test.clone().detach()  # X_test is already a float tensor


output = model(Xt) #model predictions

output[:5]

tensor([[8.3895e-13],

[6.5180e-06],

[9.9800e-01],

[1.0000e+00],

[6.9652e-16]], grad_fn=<SliceBackward0>)

#convert probabilities to 0/1 predictions

preds = np.where(np.array(output.detach()) >= .5, 1, 0)

preds.shape

(746, 1)

y_test.shape

torch.Size([746])

(preds.flatten() == y_test.flatten()).sum()/len(y_test)

tensor(0.9651)

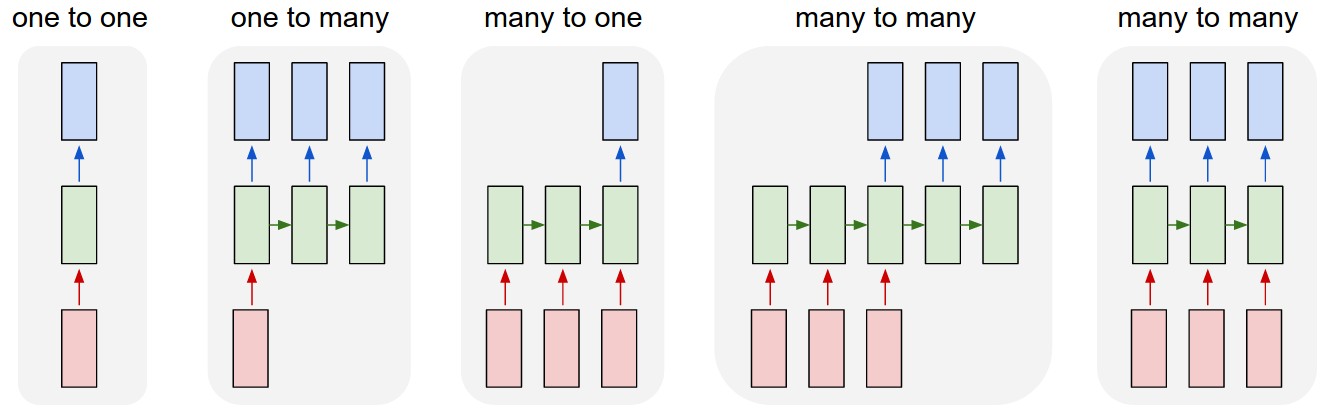

Basic RNN

#create sequences

sequences = tokenizer.texts_to_sequences(df['test_case'])

#look at the second sequence (index 1)

sequences[1]

[5, 96, 15, 1]

#compare to text

df['test_case'].values[1]

'I hate trans people. '

#pad and make all same length

sequences = pad_sequences(sequences, maxlen=30)

#examine results

sequences[1].shape

(30,)

sequences[1]

array([ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 5, 96, 15, 1], dtype=int32)
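`pad_sequences` defaults to `padding='pre'` and `truncating='pre'`: zeros go on the left, and an over-long sequence loses its earliest tokens. A left-to-right RNN therefore reads the real words last, just before it produces its final hidden state. The NumPy sketch below reproduces those defaults for integer sequences. The helper name `left_pad` is hypothetical, and it assumes `maxlen >= 1`.

```python
import numpy as np


def left_pad(sequences, maxlen, value=0):
    """Pre-pad and pre-truncate integer sequences to length maxlen (maxlen >= 1)."""
    out = np.full((len(sequences), maxlen), value, dtype=np.int32)
    for row, seq in enumerate(sequences):
        kept = list(seq)[-maxlen:]   # keep the last maxlen tokens
        if kept:
            out[row, maxlen - len(kept):] = kept  # right-align real tokens
    return out


padded = left_pad([[5, 96, 15, 1], [3, 1, 4, 1, 5, 9]], maxlen=5)
print(padded)
```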

#example rnn

rnn = nn.RNN(input_size = 30,

hidden_size = 30,

num_layers = 1,

batch_first = True)

#pass data through: a (1, 30) tensor is read as one time step with 30 features

sample_sequence = torch.tensor(sequences[1],

dtype = torch.float,

).reshape(1, -1)

sample_sequence.shape

torch.Size([1, 30])

#output

output, hidden = rnn(sample_sequence)

#hidden

hidden

tensor([[ 0.9990, -1.0000, 1.0000, -0.6773, -1.0000, -1.0000, -0.9506, -0.1827,

1.0000, -1.0000, -1.0000, -1.0000, 1.0000, 1.0000, 1.0000, -1.0000,

-0.8065, 0.9974, -0.6964, -1.0000, 0.7627, 1.0000, -1.0000, -1.0000,

1.0000, 0.9879, 1.0000, 1.0000, -1.0000, 1.0000]],

grad_fn=<SqueezeBackward1>)

#RNN output: 1 time step x 30 hidden units

output.shape

torch.Size([1, 30])

#pass through linear

lin1 = nn.Linear(in_features = 30, out_features = 1)

#raw score (a logit, not yet a probability)

lin1(output)

tensor([[0.8009]], grad_fn=<AddmmBackward0>)

torch.sigmoid(lin1(output))

tensor([[0.6902]], grad_fn=<SigmoidBackward0>)
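The single-layer `nn.RNN` used above computes, at each step, h_t = tanh(x_t W_ih^T + b_ih + h_{t-1} W_hh^T + b_hh), as stated in the PyTorch documentation. The NumPy sketch below spells out that recurrence with random weights (an assumption; PyTorch initialises its own). Feeding raw token ids such as 96 as features produces large pre-activations, which push tanh toward ±1. That is a plausible reason the hidden state printed above is dominated by values of ±1.0000.

```python
import numpy as np


def elman_rnn(x, W_ih, W_hh, b_ih, b_hh, h0=None):
    """Single-layer tanh RNN over x of shape (seq_len, input_size)."""
    h = np.zeros(W_hh.shape[0]) if h0 is None else h0
    outputs = []
    for x_t in x:                                   # one time step at a time
        h = np.tanh(x_t @ W_ih.T + b_ih + h @ W_hh.T + b_hh)
        outputs.append(h)
    return np.stack(outputs), h                     # all outputs, final hidden state


rng = np.random.default_rng(0)
input_size = hidden_size = 30
W_ih = rng.normal(scale=0.1, size=(hidden_size, input_size))
W_hh = rng.normal(scale=0.1, size=(hidden_size, hidden_size))
b_ih = np.zeros(hidden_size)
b_hh = np.zeros(hidden_size)

# Like sample_sequence: one time step whose 30 features are padded token ids
x = np.zeros((1, 30))
x[0, -4:] = [5, 96, 15, 1]
out, h_n = elman_rnn(x, W_ih, W_hh, b_ih, b_hh)

print(out.shape, np.allclose(out[-1], h_n))
print("share of saturated units:", np.mean(np.abs(h_n) > 0.99))
```

For a single time step the output and the final hidden state are the same vector, which matches `output` and `hidden` above holding identical values.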

X_train.shape

torch.Size([2982, 500])

y_train.shape

torch.Size([2982])

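# This does not work as written: nn.RNN returns a tuple (output, h_n),
# and nn.Sequential would pass that tuple straight into nn.Linear.
# model = nn.Sequential(nn.RNN(input_size = 30, hidden_size = 30, num_layers = 2, batch_first = True),
#                       nn.Linear(in_features = 30, out_features = 1),
#                       nn.Sigmoid())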

#class
class BasicRNN(nn.Module):
    def __init__(self):
        super().__init__()
        self.rnn = nn.RNN(input_size = 30,
                          hidden_size = 30,
                          num_layers = 3,
                          batch_first = False)
        self.lin1 = nn.Linear(in_features = 30, out_features=30)
        self.lin2 = nn.Linear(30, 100)
        self.lin3 = nn.Linear(100, 1)
        self.act = nn.ReLU()
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        # x has shape (N, 30). nn.RNN treats a 2D input as one unbatched
        # sequence of length N, so each row is a time step of 30 features
        # and batch_first has no effect here.
        x, _ = self.rnn(x)
        x = self.act(self.lin1(x))
        x = self.act(self.lin2(x))
        x = self.sigmoid(self.lin3(x))
        return x

y = np.where(df['label_gold'] == 'hateful', 1, 0)

X = sequences

X = torch.tensor(X, dtype = torch.float)

X.shape

torch.Size([3728, 30])

y.shape

(3728,)

y = torch.tensor(y, dtype = torch.float)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = .2)

#optimizer and loss

model = BasicRNN()

optimizer = optim.Adam(model.parameters(), lr = 0.01)

loss_fn = nn.BCELoss()

#train
for epoch in range(20):
    yhat = model(X_train)
    target = y_train.reshape(-1, 1)
    loss = loss_fn(yhat, target)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    if epoch % 10 == 0:
        print(f'Epoch {epoch} Loss: {loss.item():.4f}')

Xt = X_test.clone().detach()  # X_test is already a float tensor


output = model(Xt)

preds = np.where(np.array(output.detach()) >= .5, 1, 0)

y_test

tensor([1., 1., 1., 1., 1., 1., 0., 0., 1., 1., 1., 0., 0., 1., 1., 0., 1., 0.,

1., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 1., 1., 0., 0., 0.,

1., 0., 1., 0., 1., 0., 1., 0., 0., 1., 0., 1., 0., 1., 0., 1., 1., 1.,

1., 0., 1., 1., 1., 0., 1., 1., 0., 0., 0., 1., 1., 0., 1., 1., 1., 0.,

0., 1., 0., 1., 1., 1., 1., 1., 1., 0., 1., 0., 1., 1., 0., 1., 1., 0.,

0., 1., 0., 0., 1., 1., 1., 1., 1., 0., 0., 1., 1., 1., 0., 0., 1., 0.,

1., 0., 1., 0., 1., 1., 1., 1., 1., 1., 0., 0., 1., 1., 1., 0., 0., 0.,

1., 0., 0., 0., 1., 0., 1., 1., 1., 1., 0., 0., 0., 1., 1., 1., 1., 1.,

1., 0., 1., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 0.,

1., 1., 1., 1., 1., 0., 0., 0., 1., 0., 1., 1., 0., 0., 1., 1., 0., 1.,

1., 1., 1., 1., 1., 1., 0., 0., 1., 1., 1., 1., 1., 1., 1., 0., 0., 1.,

0., 0., 1., 0., 1., 1., 0., 1., 0., 0., 1., 1., 1., 1., 1., 1., 0., 0.,

1., 1., 1., 1., 1., 1., 1., 0., 1., 0., 0., 1., 1., 1., 1., 1., 0., 1.,

1., 1., 1., 1., 1., 0., 1., 1., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1.,

1., 1., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1.,

1., 1., 0., 1., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1., 0., 0., 1., 1.,

1., 0., 1., 1., 1., 1., 1., 0., 1., 1., 1., 1., 0., 0., 1., 0., 1., 1.,

1., 0., 1., 0., 0., 1., 1., 1., 1., 0., 1., 1., 0., 0., 1., 1., 1., 0.,

1., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1., 0., 1., 1., 0., 1., 1., 1.,

1., 1., 1., 0., 1., 0., 0., 1., 1., 0., 0., 0., 1., 0., 0., 1., 0., 1.,

1., 0., 0., 0., 1., 1., 1., 0., 1., 0., 0., 0., 1., 1., 1., 1., 1., 1.,

0., 0., 1., 0., 1., 1., 1., 1., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1.,

0., 1., 1., 1., 0., 1., 1., 0., 1., 0., 1., 1., 0., 0., 1., 0., 1., 1.,

0., 1., 1., 1., 0., 1., 1., 1., 1., 0., 1., 0., 1., 1., 1., 0., 0., 1.,

1., 1., 1., 1., 1., 1., 1., 0., 1., 0., 1., 1., 1., 1., 0., 1., 0., 0.,

1., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 1., 0., 0., 1., 1., 1., 1.,

1., 0., 1., 1., 1., 0., 1., 1., 1., 1., 0., 1., 1., 1., 0., 1., 1., 1.,

1., 1., 0., 0., 0., 1., 1., 1., 0., 0., 1., 1., 1., 1., 0., 1., 1., 1.,

0., 1., 1., 1., 1., 0., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1., 1., 0.,

1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 0., 1., 1., 0., 0., 1.,

0., 1., 1., 1., 0., 0., 1., 1., 1., 0., 1., 1., 1., 1., 1., 1., 0., 1.,

1., 0., 0., 1., 0., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 0.,

1., 1., 0., 1., 1., 1., 1., 1., 0., 1., 0., 1., 0., 1., 1., 1., 0., 0.,

0., 0., 1., 0., 1., 0., 1., 1., 1., 1., 0., 1., 0., 1., 0., 0., 1., 0.,

1., 0., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 1., 0., 1., 0., 1., 1.,

0., 0., 1., 1., 1., 1., 1., 1., 1., 1., 1., 1., 0., 1., 1., 1., 0., 1.,

0., 1., 1., 0., 0., 1., 0., 1., 1., 1., 1., 1., 0., 0., 1., 1., 1., 1.,

1., 1., 1., 0., 1., 1., 0., 0., 0., 1., 0., 1., 1., 0., 1., 1., 1., 1.,

0., 0., 1., 0., 1., 1., 0., 1., 0., 0., 0., 1., 0., 1., 1., 1., 0., 1.,

1., 0., 1., 1., 1., 1., 1., 0., 1., 0., 1., 1., 0., 1., 1., 1., 1., 1.,

0., 1., 1., 1., 1., 1., 1., 0., 1., 1., 1., 1., 0., 1., 0., 0., 0., 0.,

0., 1., 1., 0., 1., 1., 1., 1.])

sum(preds.flatten() == y_test.flatten())/len(y_test)

tensor(0.6139)
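In the RNN cells above, the padded sequences enter as float features. PyTorch's recurrent layers read a 2D input as a single unbatched sequence of shape (sequence length, features), so the rows of `X_train` become consecutive time steps rather than separate examples. A common alternative, sketched here with a hypothetical class name and layer sizes, passes integer token ids of shape (batch, 30) through `nn.Embedding` and a recurrent layer with `batch_first=True`, then classifies from the final hidden state. The GRU and `nn.BCEWithLogitsLoss` are our choices for the sketch, not the notebook's.

```python
import torch
import torch.nn as nn


class EmbeddingRNN(nn.Module):
    """Hypothetical classifier: token ids -> embeddings -> GRU -> one logit."""

    def __init__(self, vocab_size=500, embed_dim=32, hidden_size=64):
        super().__init__()
        # padding_idx=0: the pad token's embedding stays zero and is not updated
        self.embed = nn.Embedding(vocab_size, embed_dim, padding_idx=0)
        self.rnn = nn.GRU(embed_dim, hidden_size, batch_first=True)
        self.out = nn.Linear(hidden_size, 1)

    def forward(self, token_ids):
        # token_ids: (batch, seq_len) integer tensor of padded sequences
        x = self.embed(token_ids)     # (batch, seq_len, embed_dim)
        _, h_n = self.rnn(x)          # h_n: (num_layers, batch, hidden_size)
        return self.out(h_n[-1])      # (batch, 1) logits


torch.manual_seed(0)
model = EmbeddingRNN()

# Stand-in batch: 4 left-padded sequences of length 30 with ids < 500
batch = torch.zeros(4, 30, dtype=torch.long)
batch[:, -4:] = torch.tensor([5, 96, 15, 1])

logits = model(batch)
probs = torch.sigmoid(logits)
loss = nn.BCEWithLogitsLoss()(logits, torch.ones(4, 1))
print(logits.shape, probs.flatten(), loss.item())
```

To try it on the notebook's data, the padded array would be converted with `torch.tensor(sequences, dtype=torch.long)`. A `vocab_size` of 500 matches the tokenizer's `num_words`, since `texts_to_sequences` only keeps indices below that value.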

The LSTM and GRU layers are common improvements on the basic RNN; examples are at the end of the notebook.

Pretrained Models and Hugging Face

%pip install git+https://github.com/amazon-science/chronos-forecasting.git

import torch

from chronos import ChronosPipeline

pipeline = ChronosPipeline.from_pretrained(

"amazon/chronos-t5-large",

device_map="cuda",

torch_dtype=torch.bfloat16,

)

df = pd.read_csv("https://raw.githubusercontent.com/AileenNielsen/TimeSeriesAnalysisWithPython/master/data/AirPassengers.csv")

# context must be either a 1D tensor, a list of 1D tensors,

# or a left-padded 2D tensor with batch as the first dimension

context = torch.tensor(df["#Passengers"])

prediction_length = 12

forecast = pipeline.predict(context, prediction_length) # shape [num_series, num_samples, prediction_length]

# visualize the forecast

forecast_index = range(len(df), len(df) + prediction_length)

low, median, high = np.quantile(forecast[0].numpy(), [0.1, 0.5, 0.9], axis=0)

plt.figure(figsize=(8, 4))

plt.plot(df["#Passengers"], color="royalblue", label="historical data")

plt.plot(forecast_index, median, color="tomato", label="median forecast")

plt.fill_between(forecast_index, low, high, color="tomato", alpha=0.3, label="80% prediction interval")

plt.legend()

plt.grid();
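Chronos returns sampled future trajectories rather than a single path. The shaded band is the 10th to 90th percentile across those samples at each forecast step, which is why it is labelled an 80% interval. The sketch below repeats the quantile step on synthetic random-walk samples. The count of 20 samples is an assumed stand-in for `forecast[0].numpy()`.

```python
import numpy as np

rng = np.random.default_rng(0)
# Stand-in for forecast[0].numpy(): 20 sampled paths over a 12-step horizon
samples = 400 + rng.normal(0, 25, size=(20, 12)).cumsum(axis=1)

# Quantiles across samples (axis=0) give one value per forecast step
low, median, high = np.quantile(samples, [0.1, 0.5, 0.9], axis=0)
print(low.shape, bool(np.all(low <= median) and np.all(median <= high)))
```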

Problem

Explore the available pretrained models and find one that is relevant to your final paper or simply of general interest. Load the model and run it on an example; even the example from its documentation is enough.

LSTM and GRU

class TextDataset(Dataset):
    def __init__(self, X, y):
        super().__init__()
        # as_tensor reuses X and y when they are already float tensors,
        # so no copy-construct warning is raised
        self.x = torch.as_tensor(X, dtype = torch.float)
        self.y = torch.as_tensor(y, dtype = torch.float)

    def __len__(self):
        return len(self.y)

    def __getitem__(self, idx):
        return self.x[idx], self.y[idx]
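When the inputs are already tensors of equal length, PyTorch's built-in `TensorDataset` does the same job as the custom class: indexing returns the pair `(X[i], y[i])`. The sketch uses random stand-in tensors shaped like the padded sequences and labels.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Stand-in tensors shaped like the padded sequences (N, 30) and labels (N,)
X = torch.randint(0, 500, (10, 30)).float()
y = torch.randint(0, 2, (10,)).float()

dataset = TensorDataset(X, y)   # dataset[i] -> (X[i], y[i])
loader = DataLoader(dataset, batch_size=4, shuffle=True)

xb, yb = next(iter(loader))     # first mini-batch
print(len(dataset), len(loader), xb.shape, yb.shape)
```

With 10 examples and a batch size of 4, the loader yields three batches, the last holding the remaining two examples.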

X_train.shape

torch.Size([2982, 30])

train_dataset = TextDataset(X_train, y_train)

test_dataset = TextDataset(X_test, y_test)


#training loader (reshuffled every epoch)

trainloader = DataLoader(train_dataset, batch_size = 32, shuffle = True)

#test loader

testloader = DataLoader(test_dataset, batch_size = 32)

class BasicLSTM(nn.Module):
    def __init__(self):
        super().__init__()
        self.rnn = nn.LSTM(input_size = 30,
                           hidden_size = 100,
                           num_layers = 1,
                           batch_first = True)
        self.lin1 = nn.Linear(in_features = 100, out_features=100)
        self.lin2 = nn.Linear(in_features = 100, out_features = 1)
        self.act = nn.ReLU()
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        # The LSTM returns per-step outputs plus the final (hidden, cell) states.
        # A 2D batch of shape (32, 30) is read as one unbatched sequence of 32 steps.
        x, (hn, cn) = self.rnn(x)
        x = self.act(self.lin1(x))
        x = self.lin2(x)
        return self.sigmoid(x)
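The `(hn, cn)` pair unpacked in `forward` holds the final hidden state and cell state. The PyTorch `nn.LSTM` documentation gives the per-step updates below, where σ is the sigmoid and ⊙ is elementwise multiplication. The additive update of the cell state c_t is commonly credited with helping gradients survive across long sequences, compared with the plain tanh recurrence of `nn.RNN`.

```latex
\begin{aligned}
i_t &= \sigma(W_{ii} x_t + b_{ii} + W_{hi} h_{t-1} + b_{hi}) \\
f_t &= \sigma(W_{if} x_t + b_{if} + W_{hf} h_{t-1} + b_{hf}) \\
g_t &= \tanh(W_{ig} x_t + b_{ig} + W_{hg} h_{t-1} + b_{hg}) \\
o_t &= \sigma(W_{io} x_t + b_{io} + W_{ho} h_{t-1} + b_{ho}) \\
c_t &= f_t \odot c_{t-1} + i_t \odot g_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```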

model = BasicLSTM()

optimizer = optim.Adam(model.parameters(), lr = 0.01)

loss_fn = nn.BCELoss()

#train
for epoch in range(10):
    losses = 0
    for x, y in trainloader:
        yhat = model(x)
        y = y.reshape(-1, 1)
        loss = loss_fn(yhat, y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        losses += loss.item()  # sum of the batch losses in this epoch
    if epoch % 10 == 0:
        print(f'Epoch {epoch} Loss: {losses}')

Epoch 0 Loss: 58.59839341044426

Xt = X_test.clone().detach()  # X_test is already a float tensor

output = model(Xt)

preds = np.where(np.array(output.detach()) >= .5, 1, 0)

sum(preds[:, 0] == y_test)/len(y_test)


tensor(0.7024)

class RNN2(nn.Module):
    def __init__(self):
        super().__init__()
        self.rnn = nn.GRU(input_size = 30,
                          hidden_size = 30,
                          num_layers = 2,
                          batch_first = True)
        self.lin1 = nn.Linear(in_features = 30, out_features=100)
        self.lin2 = nn.Linear(in_features = 100, out_features = 1)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        x, _ = self.rnn(x)
        # No activation between lin1 and lin2, so together they act as one linear map
        x = self.lin1(x)
        x = self.lin2(x)
        return self.sigmoid(x)

model = RNN2()

optimizer = optim.Adam(model.parameters(), lr = 0.01)

loss_fn = nn.BCELoss()

#train
for epoch in range(100):
    losses = 0
    for x, y in trainloader:
        yhat = model(x)
        y = y.reshape(-1, 1)
        loss = loss_fn(yhat, y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        losses += loss.item()
    if epoch % 10 == 0:
        print(f'Epoch {epoch} Loss: {losses}')

Epoch 0 Loss: 59.61756247282028

Epoch 10 Loss: 57.20614293217659

Epoch 20 Loss: 55.79645165801048

Epoch 30 Loss: 55.00879901647568

Epoch 40 Loss: 55.988073855638504

Epoch 50 Loss: 54.665479958057404

Epoch 60 Loss: 53.79632553458214

# Xt = torch.tensor(sequences, dtype = torch.float)

output = model(X_test)

preds = np.where(np.array(output.detach()) >= .5, 1, 0)

sum(preds[:, 0] == y_test.flatten())/len(y_test)

tensor(0.6689)